Highlights

- While ChatGPT is made for conversational AI, Auto-GPT is made for creating automated content.

- While Auto-GPT generates text in response to a given prompt, ChatGPT responds within the context of a conversation history.

- ChatGPT is better at natural conversation and can sustain more coherent dialogue than Auto-GPT, which is better suited to tasks like text completion.

Advances in artificial intelligence have led to the creation of many language models. Two of the most popular are ChatGPT and Auto-GPT. They have certain things in common, yet they differ significantly.

This article will analyze the differences between these two models and go in-depth into how they function.

This post will give you helpful insights into the distinctive features of AutoGPT and ChatGPT, whether you’re a seasoned AI expert or just getting started. Let’s dive into the article.

Auto-GPT and ChatGPT: How Do They Differ?

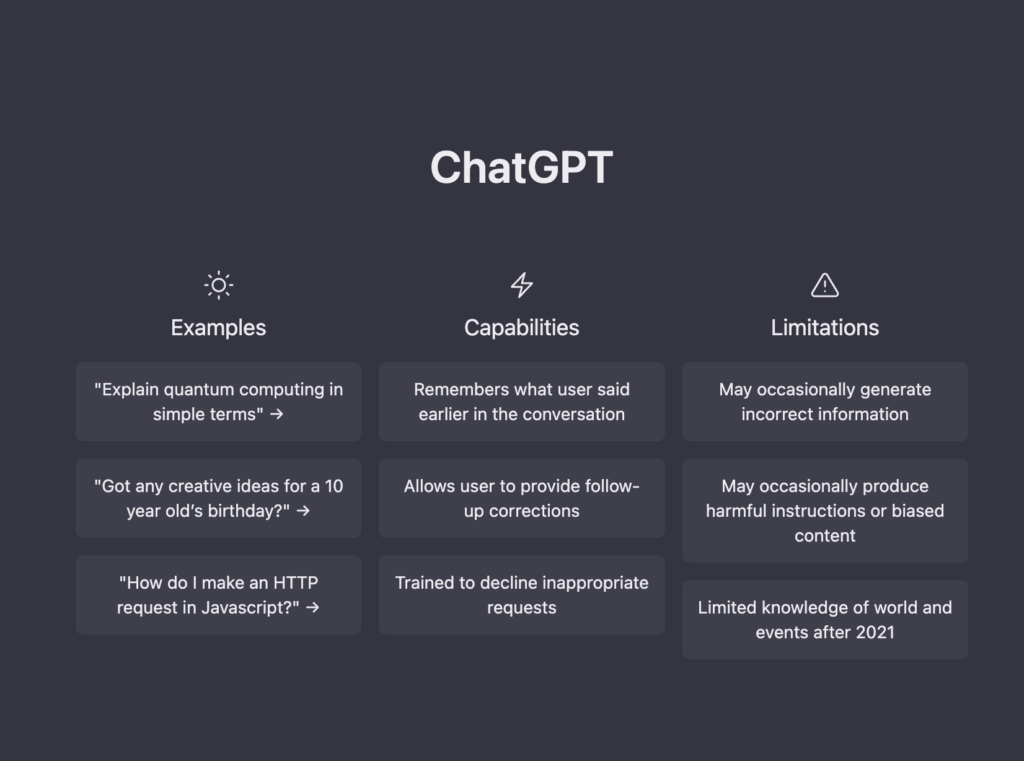

While both AutoGPT and ChatGPT are natural language processing (NLP) tools built on OpenAI's GPT models, they have different roles and objectives.

Auto-GPT was created for automated text-generation tasks, including text completion, summarization, and translation.

It belongs to the wider GPT family of models, which are trained on vast amounts of text data to discover patterns and connections between words and sentences.

Unsupervised learning approaches are specifically used to train Auto-GPT, which learns from the data without any explicit labeling or annotation.

On the other hand, ChatGPT is a conversational AI model that is intended for communication that is as natural as possible. It is created to comprehend natural language questions and provide conversational responses. It is trained on a vast corpus of human-written dialogues.

The GPT architecture of ChatGPT is identical to that of Auto-GPT; however, ChatGPT is further refined with supervised learning methods, which entail feeding the model labeled training data.
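To make the contrast between unlabeled and labeled training data concrete, here is a minimal sketch. It assumes a toy whitespace tokenizer and a hypothetical helper named `next_token_pairs`; neither comes from any real training pipeline. The point it illustrates is that, with raw text, the "label" for each position is simply the next word, whereas labeled data needs a human-written answer for each prompt.

```python
from typing import List, Tuple


def next_token_pairs(text: str, context_size: int = 3) -> List[Tuple[List[str], str]]:
    """Derive (context, target) training pairs from raw text alone.

    Hypothetical helper for illustration only: a whitespace split stands in
    for a real tokenizer, and no model is actually trained.
    """
    tokens = text.split()
    pairs = []
    for i in range(1, len(tokens)):
        # The context is the few words before position i; the target is word i.
        context = tokens[max(0, i - context_size):i]
        pairs.append((context, tokens[i]))
    return pairs


# Unlabeled text: no annotation is needed, the text supplies its own targets.
unlabeled = "the cat sat on the mat"
self_supervised = next_token_pairs(unlabeled)

# Labeled data: a person supplies the desired response for each prompt.
labeled_examples = [
    {"prompt": "What is the capital of France?", "response": "Paris."},
]

print(self_supervised[0])
print(labeled_examples[0]["response"])
```

In this sketch the first list is produced mechanically from the text, while the second has to be written by hand, which is the practical difference between the two training styles described above.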

The respective training data sets used by Auto-GPT and ChatGPT are one of their key distinctions. While ChatGPT is trained solely on conversational data, Auto-GPT is trained on a wide variety of text data, including news articles, novels, and web pages. This difference in training data significantly affects what each model can do.

For tasks requiring coherent and contextually suitable text in response to a given prompt, Auto-GPT is an excellent choice. It may be used, for instance, to summarize lengthy articles, complete sentences or paragraphs, or translate text between languages.

Because it has been trained on a wide variety of text data, it can produce writing that is highly context-aware and stays coherent across longer passages.

In contrast, ChatGPT was created primarily for dialogue. It excels at understanding questions and statements and producing natural language answers to them.

It is particularly skilled at comprehending the subtleties of human language since it has been trained on conversational data, and it can provide replies that are more human-like in tone and style. This makes ChatGPT ideal for use in chatbots, virtual assistants, and customer service interactions, among other uses.
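The difference between completing a single prompt and replying within a dialogue can be sketched as a difference in input shape. The snippet below is a rough illustration, not either tool's actual interface: the `render_history` helper and the `role`/`content` message layout are assumptions chosen for clarity, and no model is called.

```python
from typing import Dict, List

Message = Dict[str, str]


def render_history(history: List[Message]) -> str:
    """Flatten role-tagged turns into one text prompt (hypothetical helper)."""
    lines = [f"{m['role']}: {m['content']}" for m in history]
    lines.append("assistant:")  # cue for the model to write the next reply
    return "\n".join(lines)


# Single-prompt style: one self-contained instruction.
single_prompt = "Summarize the following article in two sentences: ..."

# Dialogue style: every earlier turn is carried along as context.
history = [
    {"role": "user", "content": "What is Auto-GPT?"},
    {"role": "assistant", "content": "A tool built on GPT models."},
    {"role": "user", "content": "How is it different from ChatGPT?"},
]
conversation_prompt = render_history(history)

print(conversation_prompt)
```

Because the whole history is passed along, the final question ("How is it different...") can be understood even though it never names Auto-GPT itself.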

Table of Differences on “How is Auto-GPT Different from ChatGPT?”

| Aspect | Auto-GPT | ChatGPT |
|---|---|---|
| Use case | Trained in a diverse range of data | Generates text on any topic or subject |
| Fine-tuning | Fine-tuning is necessary | Fine-tuning is optional but can improve performance |
| Training Data | Trained on a specific domain | Narrower use cases, such as completing forms or answering FAQs |
| Control over output | Limited control over output | Trained in a diverse range of data |
| Model size | Smaller model size | Larger model size |
| Customization | Customization is limited | Customization is flexible and extensive |
| API | Available through OpenAI API | Available through OpenAI API and various cloud providers |
| Use-cases | Narrower use-cases such as completing forms or answering FAQs | Narrower use cases such as completing forms or answering FAQs |

How to Use Auto-GPT?

Step 1: Choose a Programming Language and Task:

The first step is to decide on the programming language and the task you want to generate code for. AutoGPT supports several programming languages, including Python, JavaScript, Ruby, and PHP.

It also covers a wide range of project types, including web development, machine learning, data analysis, and more.

Step 2: Install the Auto-GPT library:

To use the tool, you must first install the AutoGPT library. Run the following command in your terminal to accomplish this: `pip install autogpt`

Step 3: Import the Auto-GPT Library:

Once the AutoGPT library has been installed, import it by adding the following line at the start of your script: `import autogpt`

Step 4: Set Up the Auto-GPT Model:

Next, configure the Auto-GPT model by creating an instance of the `AutoGPT` class and specifying the programming language and task you want to generate code for.

For instance, to set up the model to produce Python code for data analysis, you would use: `model = autogpt.AutoGPT(lang="python", task="data-analysis")`

Step 5: Generate Code:

Once the model is configured, you can generate code by calling the `generate()` function with a prompt that describes the code you want.

For instance, to produce Python code for reading a CSV file, you could use: `code = model.generate("Read CSV file in Python")`

The `generate()` function returns a string containing the generated code.

You can then use the generated code in your project, either by saving it to a file and importing it or by copying and pasting it into your script.
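As a rough sketch of the "save it to a file and import it" route, the snippet below uses only the Python standard library. The `generated_code` string is a hand-written placeholder standing in for whatever `generate()` is described as returning, and `load_module_from_source` is a hypothetical helper; neither is taken from the AutoGPT library itself.

```python
import importlib.util
import tempfile
from pathlib import Path

# Placeholder for the string that generate() is described as returning.
generated_code = '''
import csv

def read_csv(path):
    """Return the rows of a CSV file as a list of dictionaries."""
    with open(path, newline="") as handle:
        return list(csv.DictReader(handle))
'''


def load_module_from_source(source: str, name: str, directory: Path):
    """Save source code to <directory>/<name>.py and import it as a module."""
    module_path = directory / f"{name}.py"
    module_path.write_text(source, encoding="utf-8")
    spec = importlib.util.spec_from_file_location(name, module_path)
    module = importlib.util.module_from_spec(spec)
    spec.loader.exec_module(module)
    return module


with tempfile.TemporaryDirectory() as tmp:
    workdir = Path(tmp)
    generated = load_module_from_source(generated_code, "generated_reader", workdir)

    # Try the imported function on a small sample file.
    sample = workdir / "sample.csv"
    sample.write_text("name,score\nAda,90\nLin,85\n", encoding="utf-8")
    rows = generated.read_csv(sample)

print(rows)
```

Importing a file runs whatever code it contains, so it is worth reading generated code before loading it this way.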

In short, using Auto-GPT is straightforward. Even if you are not an experienced programmer, you can quickly produce high-quality code by following these steps.

Wrapping It All Up

In conclusion, the GPT language model has two variants: Auto-GPT and ChatGPT, each of which has a specific use.

While ChatGPT is a conversational agent created to have human-like conversations, AutoGPT is a task-specific model that focuses on producing automated content for a variety of applications, such as translation and summarization.

Even though both models use the same architecture and are trained on enormous quantities of data, their distinct training goals and intended uses cause them to behave very differently.

Overall, these two models show the GPT architecture’s strength and adaptability as well as its potential to change several areas of natural language processing.
